via Carnegie Mellon University

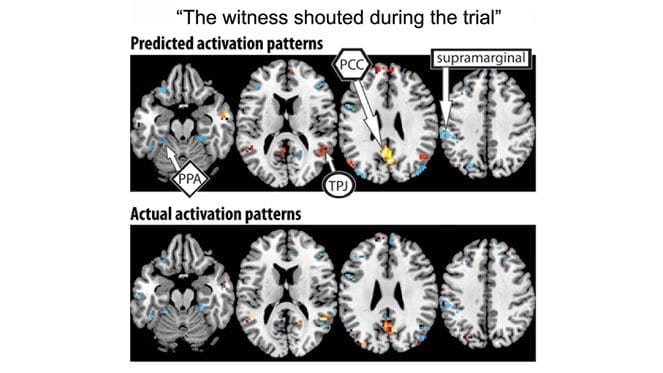

Carnegie Mellon University scientists can now use brain activation patterns to identify complex thoughts, such as “The witness shouted during the trial.”

This latest research led by CMU’s Marcel Just builds on the pioneering use of machine learning algorithms with brain imaging technology to “mind read.” The findings indicate that the mind’s building blocks for constructing complex thoughts are formed by the brain’s various sub-systems and are not word-based. Published in Human Brain Mapping and funded by the Intelligence Advanced Research Projects Activity (IARPA), the study offers new evidence that the neural dimensions of concept representation are universal across people and languages.

“One of the big advances of the human brain was the ability to combine individual concepts into complex thoughts, to think not just of ‘bananas,’ but ‘I like to eat bananas in evening with my friends,’” said Just, the D.O. Hebb University Professor of Psychology in the Dietrich College of Humanities and Social Sciences. “We have finally developed a way to see thoughts of that complexity in the fMRI signal. The discovery of this correspondence between thoughts and brain activation patterns tells us what the thoughts are built of.”

Previous work by Just and his team showed that thoughts of familiar objects, like bananas or hammers, evoke activation patterns that involve the neural systems that we use to deal with those objects. For example, how you interact with a banana involves how you hold it, how you bite it and what it looks like.

The new study demonstrates that the brain’s coding of 240 complex events, sentences like the shouting-during-the-trial scenario, uses an alphabet of 42 meaning components, or neurally plausible semantic features, such as person, setting, size, social interaction and physical action. Each type of information is processed in a different brain system, which is also how the brain processes information about objects. By measuring the activation in each brain system, the program can tell what types of thoughts are being contemplated.

For seven adult participants, the researchers used a computational model to assess how the brain activation patterns for 239 sentences corresponded to the neurally plausible semantic features that characterized each sentence. The program was then able to decode the features of the 240th, left-out sentence. The researchers repeated this procedure, leaving out each of the 240 sentences in turn, a technique called cross-validation.
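The leave-one-out procedure described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the CMU team's model: the data are synthetic (40 made-up "sentences", 4 binary semantic features and 8 simulated voxels instead of 240 sentences and 42 features), and the decoder is a ridge regression from activation to features, a stand-in chosen for simplicity. All function names are hypothetical.

```python
import random


def matmul(a, b):
    """Multiply matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a):
    return [list(col) for col in zip(*a)]


def solve(a, b):
    """Solve a @ x = b (a is n x n, b is n x m) by Gauss-Jordan elimination."""
    n = len(a)
    m = [row[:] + rhs[:] for row, rhs in zip(a, b)]
    for col in range(n):
        # Partial pivoting: swap up the row with the largest entry in this column.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        p = m[col][col]
        m[col] = [v / p for v in m[col]]
        for r in range(n):
            if r != col:
                factor = m[r][col]
                m[r] = [v - factor * w for v, w in zip(m[r], m[col])]
    return [row[n:] for row in m]


def ridge_fit(x, y, lam):
    """Weights B minimising ||x B - y||^2 + lam * ||B||^2 (normal equations)."""
    xt = transpose(x)
    gram = matmul(xt, x)
    for i in range(len(gram)):
        gram[i][i] += lam
    return solve(gram, matmul(xt, y))


def make_toy_data(n_sentences=40, n_features=4, n_voxels=8, noise=0.05, seed=0):
    """Synthetic data: each voxel responds linearly to the features present, plus noise."""
    rng = random.Random(seed)
    weights = [[rng.uniform(-1, 1) for _ in range(n_voxels)] for _ in range(n_features)]
    feats = [[float(rng.random() < 0.5) for _ in range(n_features)]
             for _ in range(n_sentences)]
    acts = [[v + rng.gauss(0, noise) for v in row] for row in matmul(feats, weights)]
    return feats, acts


def leave_one_out_accuracy(feats, acts, lam=0.1):
    """Train on all sentences but one, decode the held-out one, repeat for each."""
    correct = total = 0
    for i in range(len(feats)):
        train_x = acts[:i] + acts[i + 1:]
        train_y = feats[:i] + feats[i + 1:]
        b = ridge_fit(train_x, train_y, lam)
        decoded = matmul([acts[i]], b)[0]
        # A feature counts as correct when the thresholded prediction matches it.
        for pred, true in zip(decoded, feats[i]):
            correct += (pred > 0.5) == (true > 0.5)
            total += 1
    return correct / total


feats, acts = make_toy_data()
print(f"leave-one-out feature accuracy: {leave_one_out_accuracy(feats, acts):.2f}")
```

The key property is that the held-out sentence never contributes to the fitted weights, so its decoded features are a genuine prediction rather than a memorized answer.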

The model predicted the features of the left-out sentence with 87 percent accuracy, despite never having been exposed to its activation pattern before. It also worked in the other direction, predicting the activation pattern of a previously unseen sentence from its semantic features alone.
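Working in the other direction amounts to treating each semantic feature as contributing its own pattern of activation and combining those patterns. The sketch below assumes a simple additive model with three hypothetical features and hand-picked weights over five simulated voxels; in a real study the weights would be estimated from the training sentences. It scores the prediction with rank accuracy, one common way to check whether a predicted pattern resembles the correct sentence's scan more than the alternatives. The article does not specify the scoring method the researchers used.

```python
import math

# Hypothetical weights: how much each semantic feature is assumed to raise each of
# five simulated voxels. Real weights would be learned from training sentences.
WEIGHTS = [
    [1.0, 0.0, 0.0, 1.0, 0.0],  # person
    [0.0, 1.0, 0.0, 0.0, 1.0],  # physical_action
    [0.0, 0.0, 1.0, 1.0, 0.0],  # social_interaction
]


def predict_activation(features, weights):
    """Additive encoding model: sum the patterns of the features that are present."""
    n_voxels = len(weights[0])
    return [sum(f * w[v] for f, w in zip(features, weights)) for v in range(n_voxels)]


def pearson(a, b):
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)


def rank_accuracy(predicted, observed, true_index):
    """Share of wrong candidates whose observed pattern correlates less with the
    prediction than the true sentence's pattern does; 1.0 is a perfect score."""
    true_r = pearson(predicted, observed[true_index])
    others = [pearson(predicted, obs) for i, obs in enumerate(observed) if i != true_index]
    return sum(r < true_r for r in others) / len(others)


# Three candidate sentences described only by their features (1 = present).
candidates = [[1, 1, 0], [1, 0, 1], [0, 1, 1]]
# Simulated "observed" scans: the model's pattern plus small fixed noise.
noise = [
    [0.1, -0.1, 0.0, 0.1, -0.1],
    [-0.1, 0.1, 0.1, 0.0, 0.1],
    [0.0, 0.1, -0.1, 0.1, 0.0],
]
observed = [
    [p + e for p, e in zip(predict_activation(c, WEIGHTS), n)]
    for c, n in zip(candidates, noise)
]

# Treat sentence 1 as "previously unseen": predict its scan from its features alone.
predicted = predict_activation(candidates[1], WEIGHTS)
print(f"rank accuracy for sentence 1: {rank_accuracy(predicted, observed, 1):.2f}")
```

With this toy data the prediction for sentence 1 correlates far more strongly with its own simulated scan than with either alternative, so it ranks first.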

“Our method overcomes the unfortunate property of fMRI to smear together the signals emanating from brain events that occur close together in time, like the reading of two successive words in a sentence,” Just said. “This advance makes it possible for the first time to decode thoughts containing several concepts. That’s what most human thoughts are composed of.”
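The smearing Just describes can be illustrated with a standard simplification: the measured fMRI (BOLD) signal behaves roughly like the neural events passed through a slow hemodynamic response that peaks several seconds later. The sketch assumes a gamma-shaped response peaking at 5 s and a 0.5 s gap between two successive words; both numbers are illustrative. It shows only the problem, not the method Just's team used to overcome it, which the article does not describe.

```python
import math


def gamma_hrf(t):
    """Assumed hemodynamic response: gamma density (shape 6, scale 1 s), peak at 5 s."""
    if t <= 0:
        return 0.0
    return t ** 5 * math.exp(-t) / math.factorial(5)


def bold_signal(onsets, times):
    """Linear model: the signal is the sum of one delayed response per neural event."""
    return [sum(gamma_hrf(t - onset) for onset in onsets) for t in times]


def pearson(a, b):
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    return cov / (sa * sb)


times = [i * 0.1 for i in range(301)]      # 0 to 30 s in 0.1 s steps
word_1 = bold_signal([0.0], times)         # first word read at t = 0 s
word_2 = bold_signal([0.5], times)         # next word, assumed 0.5 s later
sentence = bold_signal([0.0, 0.5], times)  # the overlapping sum the scanner records

peak_1 = times[word_1.index(max(word_1))]
peak_2 = times[word_2.index(max(word_2))]
print(f"peaks at {peak_1:.1f} s and {peak_2:.1f} s")
print(f"correlation between the two words' responses: {pearson(word_1, word_2):.3f}")
```

Each word's response spreads over many seconds while the two peaks are only half a second apart, so the two responses are almost perfectly correlated and the recorded sum carries little direct timing information about which word produced which part.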

He added, “A next step might be to decode the general type of topic a person is thinking about, such as geology or skateboarding. We are on the way to making a map of all the types of knowledge in the brain.”

Learn more: Beyond Bananas: CMU Scientists Harness “Mind Reading” Technology to Decode Complex Thoughts
